Those who cannot remember the past are condemned to repeat it

The year that is just ending marked the 20th anniversary of the Piper Alpha disaster. A total of 167 men died when the North Sea oil platform exploded and caught fire in July 1988. The result was a major overhaul of offshore safety – and the near collapse of the London marine insurance market in a way which has echoes today.

Lloyd’s syndicates and companies in the London excess of loss (LMX) market had insured the programme that included the platform. Then, in a very soft market with cheap reinsurance, they laid off large proportions of the risk to the next syndicate or reinsurance company along the line. A number of underwriters who got involved were not even marine specialists, but wrote the programme as a means of topping up their premium income.

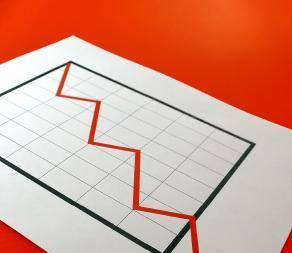

Piper Alpha was a $1.4 billion loss, the largest to that date for a man-made disaster and far more than the underwriters had thought likely. It climbed through the layers of reinsurance and retrocession to reach syndicates and companies that thought they were remote from the risk. As it did, underwriters found that they were exposed to the same loss multiple times through reinsurance programmes they had written for other players in the market.

The spiral collapsed. For some marine accounts, the excess of loss ratio for 1987-88 was over 1000%, meaning claims of more than 10 times the premium income, and those were the insurers who survived long enough afterwards to report their figures to what is now the International Underwriting Association (IUA). Others went into run-off or withdrew from the market.

Today’s international banking crisis is the LMX spiral increased many times in size and geographical distribution. The underlying cause is the same: a loss of contact with the fundamental risk and ignorance of the accumulations of exposure. Some of the collateral causes are similar, too, such as weak regulation and asymmetries of knowledge.

In 1988, insurers did not have the mathematical and computer tools they have today to monitor their accumulations of risk; many of them did not use computers in their underwriting at all at that stage. The science of catastrophe modelling developed after Hurricane Andrew in 1992 sucked companies and capital out of the US insurance market.

Danger

Since then, computing power and mathematical techniques for modelling risk have advanced. Catastrophe modelling has probably made the insurance industry more disciplined, because it is more aware of its potential for loss than it was in 1988 or 1992. The risk remains, however, in over-reliance on mathematical engines, as the larger than expected losses from Hurricane Katrina in 2005 demonstrated to the insurance market.

Cat models are an attempt to make sense of highly complex natural systems, and so they are shot through with uncertainty, as any cat modeller freely admits. There are also limits to their ability to represent the cascading impact of the largest events on the complex networks on which societies depend: another lesson from Hurricane Katrina.

We cannot reduce complex systems, such as weather, large communities or developed financial markets, to a bunch of algorithms that will answer all the questions and allow us to make money without danger. It was for just this reason that Lloyd’s introduced its realistic disaster scenarios, so that underwriters would test their assumptions.

It should not be surprising that a low-income family wants to become part of the property boom, whether or not it can really afford to, or that financial agents with little personal exposure will take advantage of that family's lack of sophistication, and of weak controls, to make money. It is simply a variation on events that have happened many times before, and understanding it is a matter of experience, not modelling. Likewise, in managing catastrophe risks, remembering disasters like Piper Alpha should help us keep a perspective on the uncertainty of exceptional events.

In either case, the argument is not that we should avoid risk, but that it is dangerous to build complex superstructures on fragile foundations: assumptions that are unsustainable because they are too distant from the original risk.

“Lessons learned” is the clumsy phrase that now appears in post-disaster reports. The Spanish-American philosopher George Santayana put it more elegantly in The Life of Reason in 1906: “Those who cannot remember the past are condemned to repeat it.”
